HIT Lab NZ Research

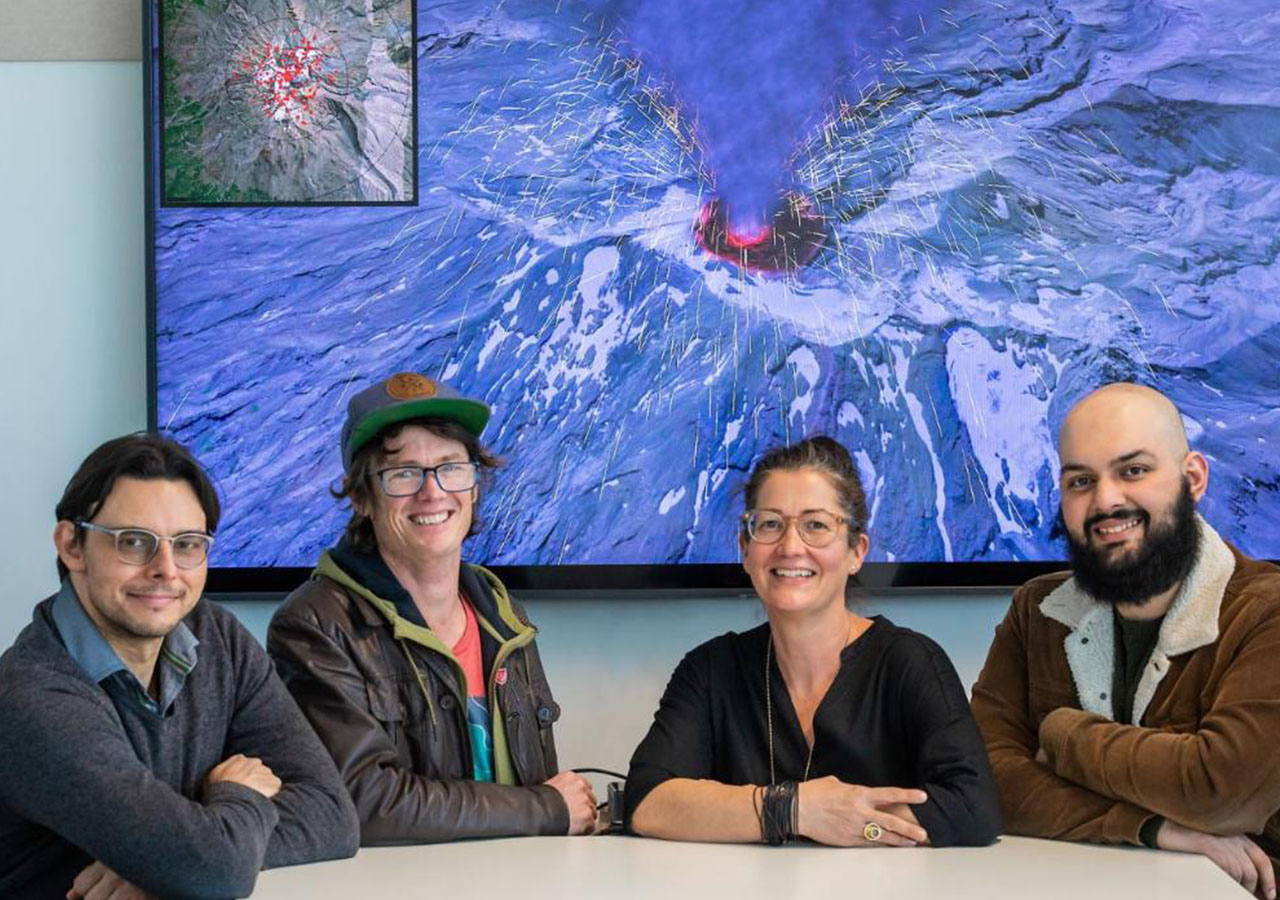

Human Interface Technology takes a human-centred approach to the analysis, design, and development of interactive technologies to improve the human experience and to meet users’ needs. As an applied research centre, most projects carried out at the HIT Lab NZ are funded by external organisations and/or research grants.

Research Expertise

Our research explores how people use interactive technologies, with the end goal of improving the user experience. The HIT Lab NZ is an international, multidisciplinary group of researchers with expertise in:

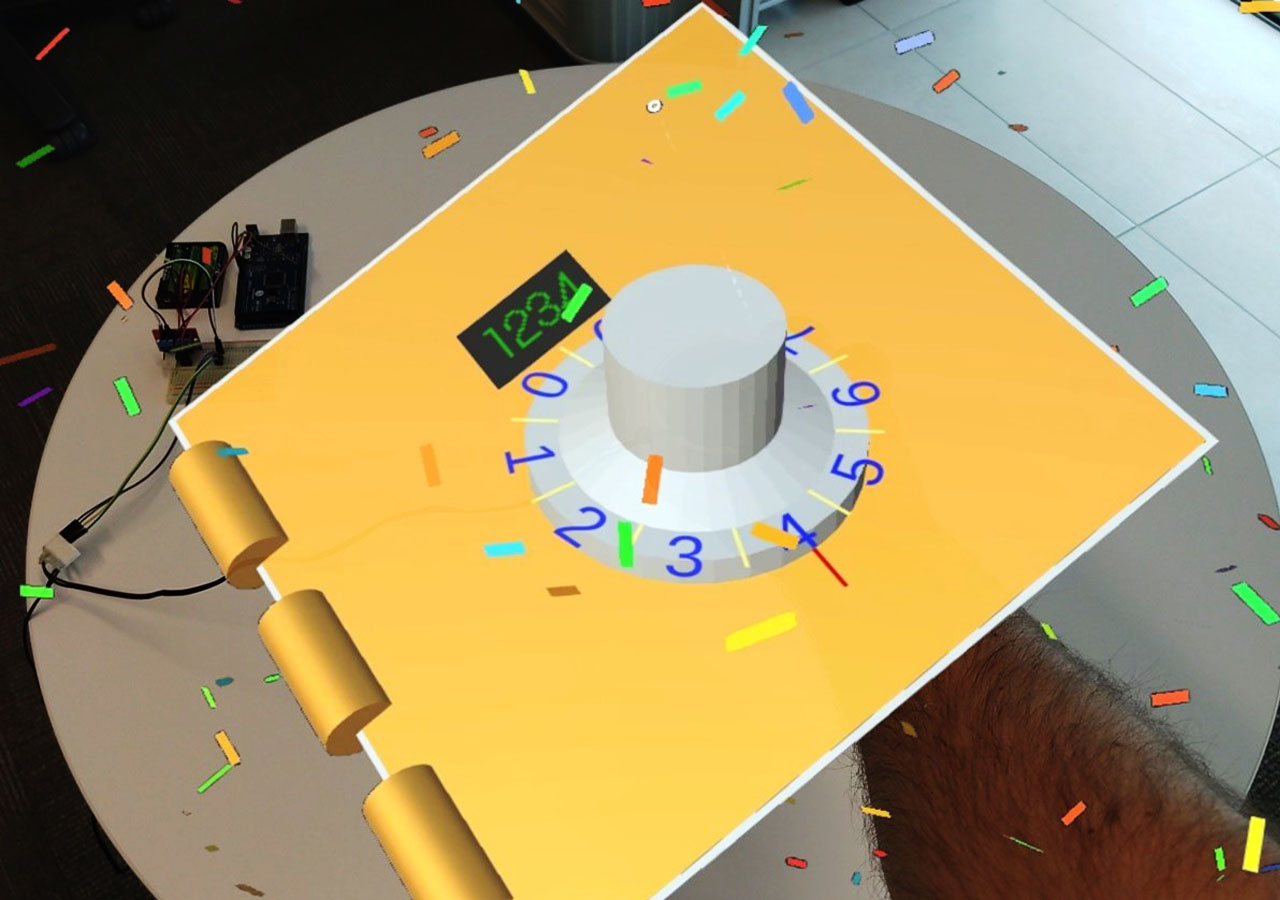

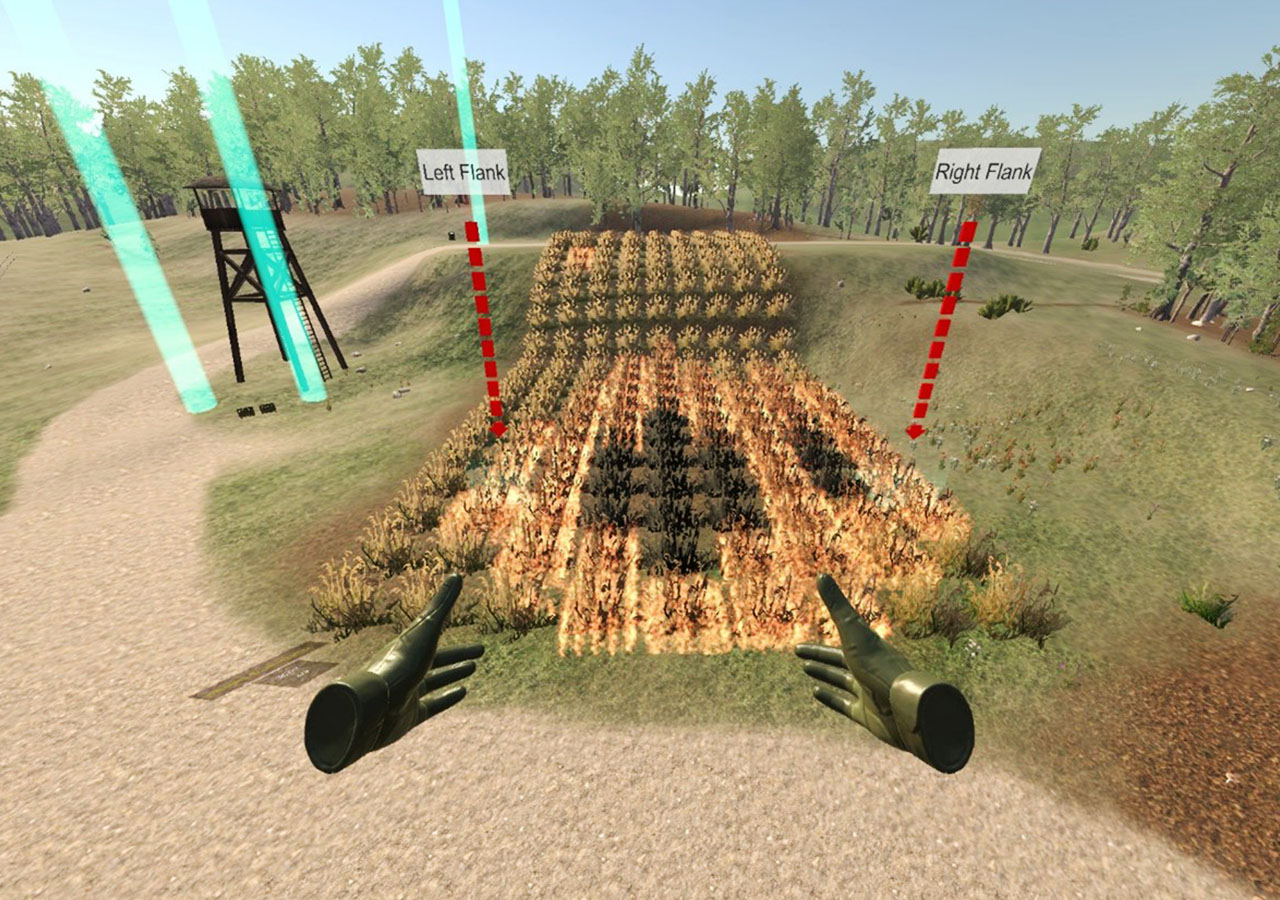

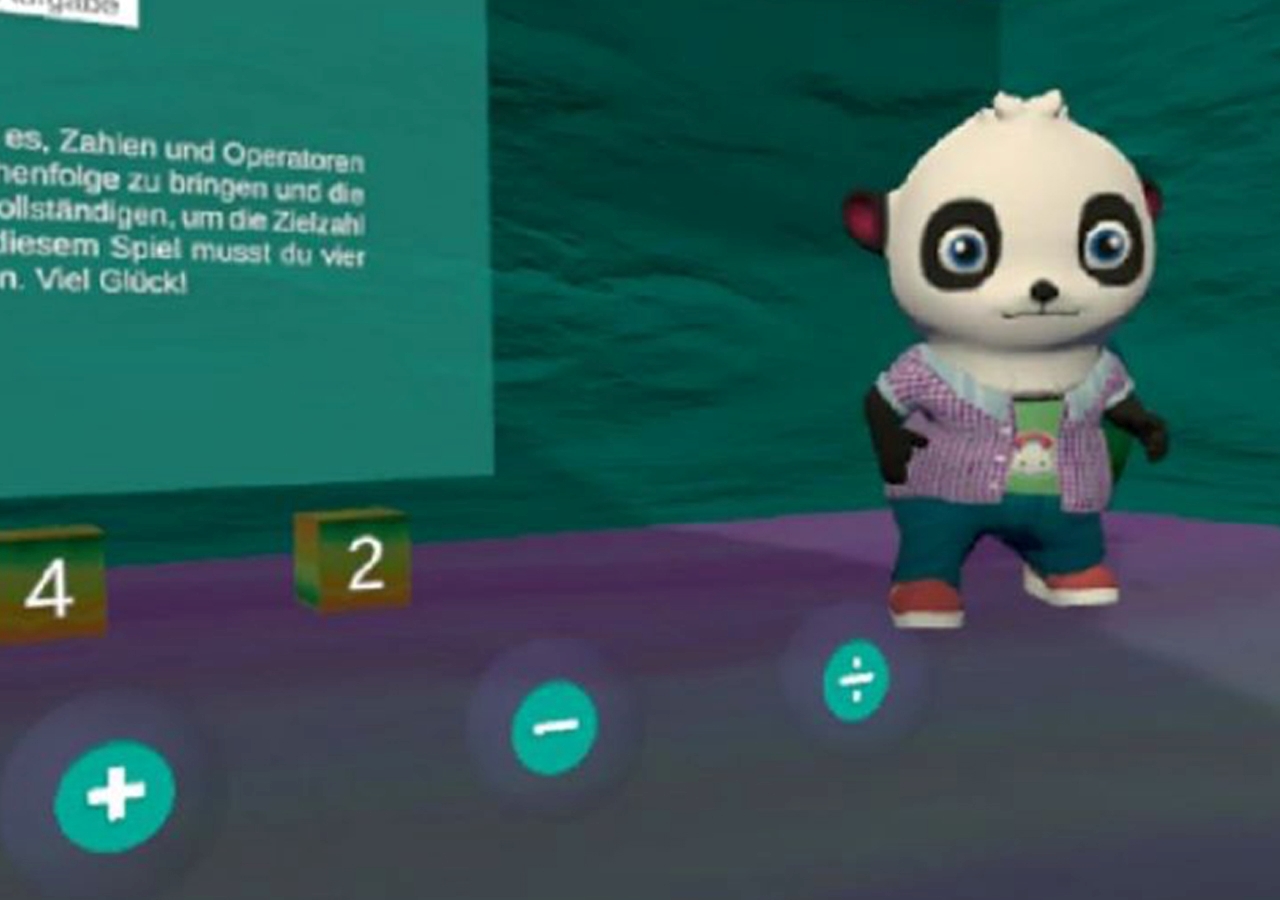

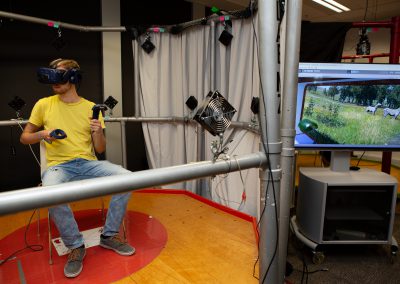

- Virtual Reality

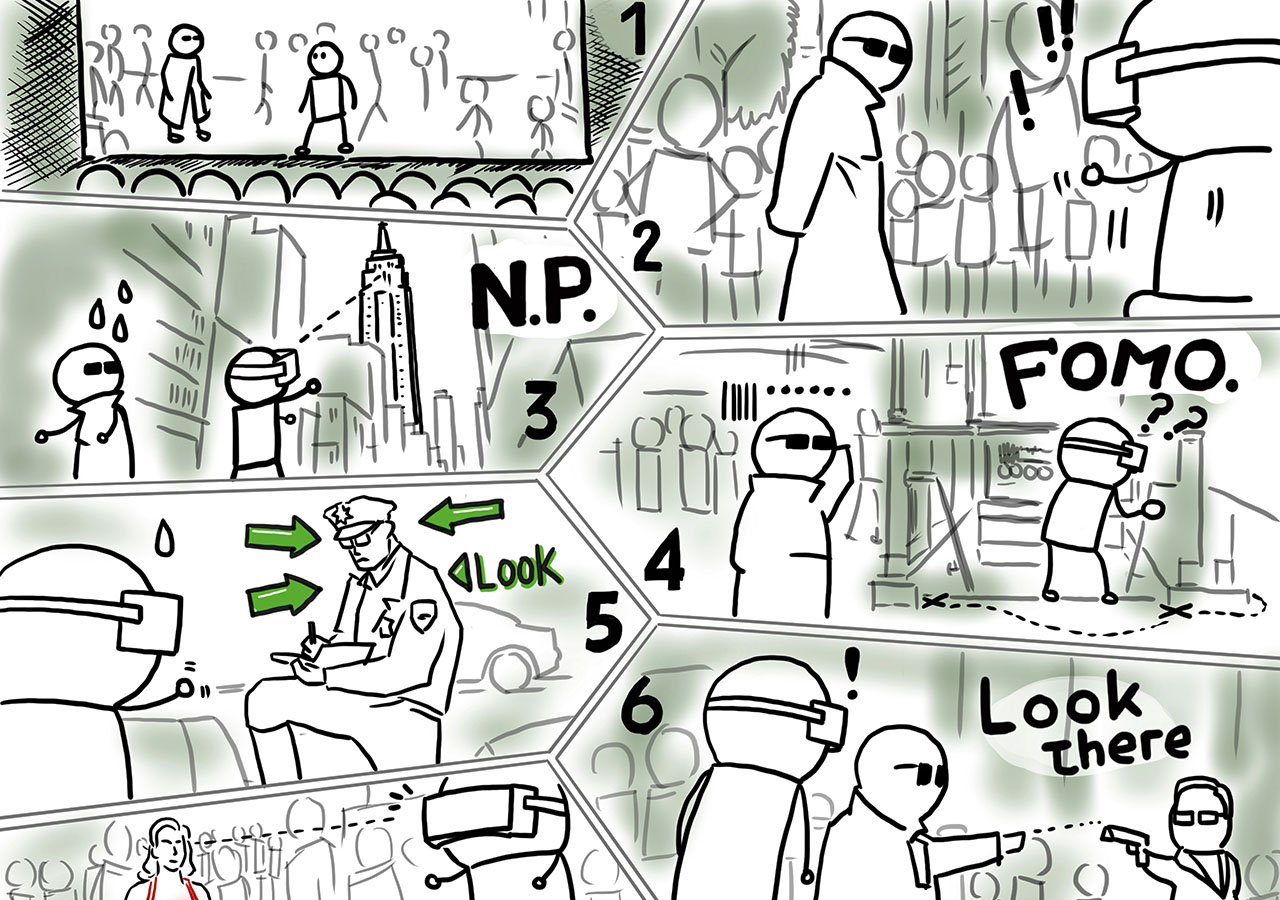

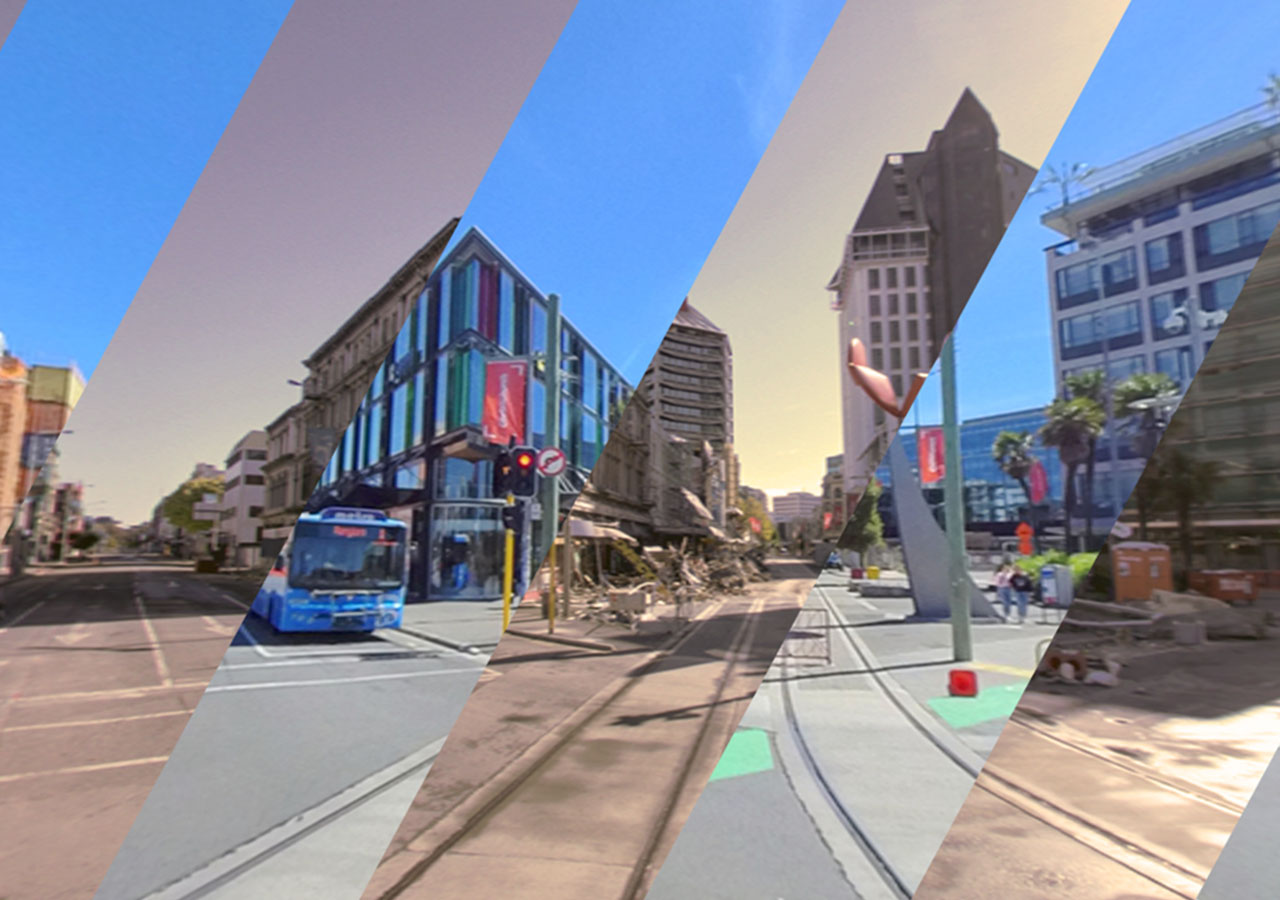

- Augmented Reality

- Applied Games

- Artificial Intelligence

- Haptics

Research Approach

We take a human-centred approach by first considering the people we are looking to support, the tasks they need help with, and the environment they will be in before we design technical solutions for them.

Potential Research Partners

Many of our research projects are carried out in collaboration with external partners and are embedded in real-world use cases.

HIT Lab NZ engages with partners from industry, academia, and government, both nationally and internationally. We use our expertise to collaborate with and consult for many sectors including:

- Health

- Education and training

- High-performance sports

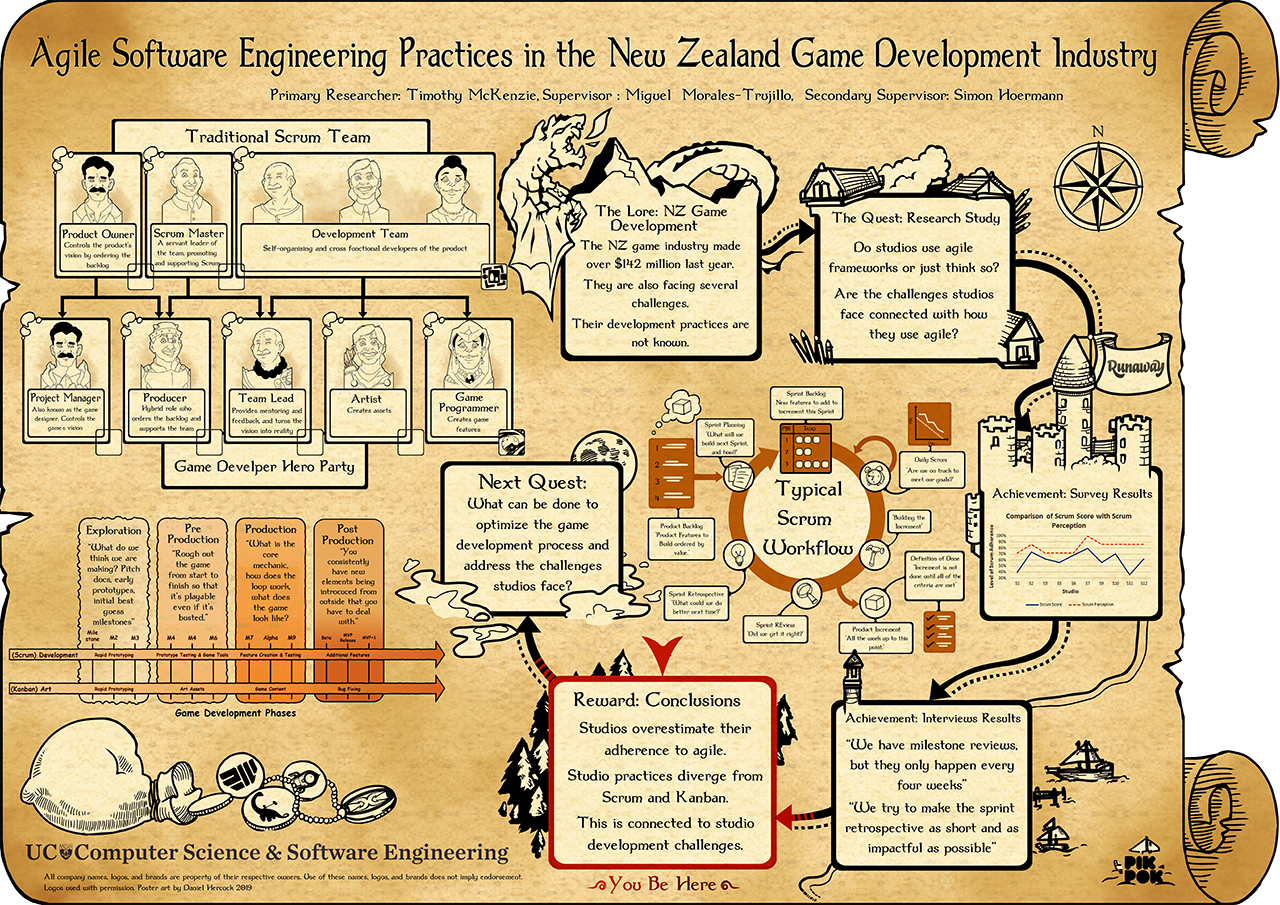

- Game development

- Disaster management

- Transportation

- Environment

- Construction

- Tourism

If you would like to discuss potential research projects with our students, collaboration opportunities, or consultancies:

- Read our Engaging with HIT Lab NZ fact sheet

- Email us at info@hitlabnz.org.

Research Publications and Outputs

Our staff and students regularly publish in a range of leading publications.

- Discover HIT Lab NZ Research Publications – both past and present.

- Search the UC Research Repository for Human Interface Technology PhD and Masters theses.